The world has tons of reports/research on performance with Gen AI tools.

It has even more industry use cases by the day.

We know that, when used intelligently, these tools can enhance performance (see this, this, and this). Plus, it’s clear that we see the biggest short-term ROI in the boring and basic tasks.

I’ve found in L&D (and, to be fair, many industries) that we get distracted. We focus so often on the ‘shiny thing’ that we continually miss the point.

If AI ‘does it for you’, what happens to your skills?

Although I like the power, potential, and continued promise of AI tools, I’m troubled by the unexpected consequences of the manic pursuit of ‘AI at all costs.’

Especially, AI’s impact on skills.

I sense that we already over-rely on certain tools, and in doing so, we both create illusions of capabilities and fail to invest in moments of intentional learning.

Granted, a lot of this comes down to the intent and ability of human users.

But with all-time high levels of use across millions of Gen AI tools, and all-time low levels of AI literacy, we could be heading for a skills car crash of our own design.

Let’s unpack that.

📌 Key Insights:

- Smart Gen AI use can expand skill capabilities for a limited time

- In most cases, we aren’t improving skills; mastery requires more than AI alone

- The majority of people will over-rely on AI tools and become ‘de-skilled’

- AI tools can help us improve critical thinking processes

- We must be more intentional in how we approach skill-building in an age of ‘do this task for me’

The Controversial Idea: Skills will be destroyed if we let AI do everything

So many people are scared that AI will take their job.

They think they’ll lose because AI tools can do the tasks better.

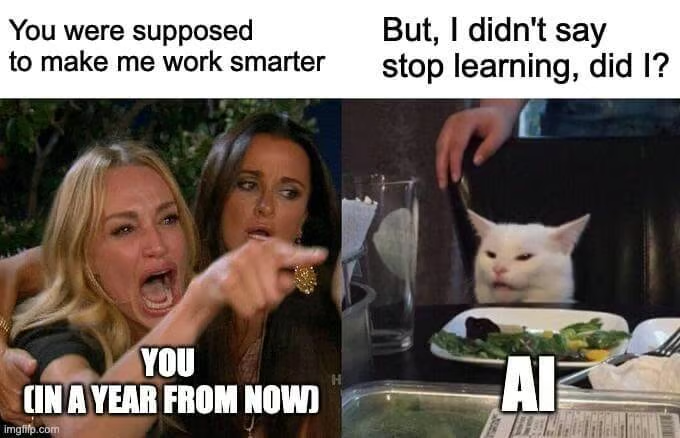

But what if you lose, not because AI does your job better, but because you over-relied on the temporary power it grants? You’d be the master of your own demise.

It’s easy to think, “That will never happen to me.”

Maybe it won’t.

But I’d ask you to consider your use of AI tools today. My assumption is that most people use them with a ‘do this thing for me’ approach, rather than a ‘show me how to do this’ one.

Here exists a problem we aren’t paying enough attention to.

An AI-first approach will damage the capabilities and potential of skills (if you allow it).

For now, this is only an observation, but it points to a dark reality I’d like to avoid.

My thinking comes from both real-world experience with consulting clients on Gen AI skills programs and what I’ve seen in more advanced research this year.

An excellent piece of research from Boston Consulting Group has been one of my favourites on this topic. Through an experiment involving 480 of their consultants, it shows that Gen AI can increase productivity and expand capabilities.

That’s the headline, of course.

AI’s impact on skills: What we know today

The problem with most research and reports is that most people don’t read beyond the headline.

Hence why we have so many cult-like statements about Gen AI’s endless power. It is powerful, in the right hands. But any power comes at a cost.

For those willing to go deeper, we find both a bundle of exciting opportunities and critical challenges.

Here are some we haven’t discussed ↓

1/ Gen AI grants short-term superpowers

No surprise here, I think.

Gen AI tools grant easy access to skills we don’t possess. They can amplify the level of our current skills too. The BCG team calls this an ‘exoskeleton’ effect.

Explained in their own words:

“We should consider generative AI as an exoskeleton: a tool that empowers workers to perform better and do more than either the human or Gen AI can on their own.”

Being a nerd, I compare this to something like Iron Man.

For those not familiar with the never-ending Marvel films, Tony Stark is a character who has no superpowers (but is a highly intelligent human). To play in the realm of superheroes, he creates his own suit of armour that gives him access to incredible capabilities he doesn’t have as a human.

The caveat is that he needs the suit to do those things.

Essentially, using an AI tool is like being given a superpower you can use only for 20 minutes. It exponentially increases your abilities, but without it, you go back to your normal state. And everyone has the same access to this power.

BCG found the same in this research.

We could call this a false-confidence dilemma.

This presents a few challenges to navigate:

- How do we combat the illusion of expertise?

- What happens when you don’t have access to AI?

- How do we stop addiction to the ‘easy option’?

Spoiler: I don’t have all the answers.

However, this temporary boost in abilities often leads to another problem – the illusion of expertise.

2/ We have to fight the illusion of expertise

This is a big challenge for us.

Getting people to look beyond AI’s illusion of expertise.

You know what I’m talking about.

Now that everyone has access to creation tools, they all think they’re learning designers who can create their own amazing products. We both know how that’s going to turn out.

As an example from my work, I can build a decent website with AI, which does the heavy coding for me. But I can’t build it without AI, not unless I learn how to do it myself.

Yes, I built x, but I’m not a software engineer.

There’s a big difference, and sadly, I see people falling into this trap already.

Now, with all this new tech, I don’t need to know the ‘why’ or ‘how’ behind something being built by AI.

But what does this mean for my skills?

A big part of skill acquisition focuses on the ‘why’ and ‘how’, in my opinion. I don’t need to know every little detail, but it helps to have a basic understanding.

I see a few unintended consequences if we don’t clearly define what is a ‘short-term expansion enabled by tech’ and what is ‘true skill acquisition’:

- We over-rely on AI tools, and this over-reliance erodes critical thinking skills, a key element in real-world problem-solving

- We lose context, a sense of understanding

- Our human reasoning skills will erode in the face of “But AI can tell me”

Each of us will fall into different groups based on our motivations.

I’m not saying we’re all collectively going to become skill-less zombies addicted to a digital crack of Gen AI tools, but it will be a reality for some.

What happens to human skills if we over-rely on AI?

This is a real grey area for me.

I’ve seen countless examples where too much AI support leads to less flexing of human skills (most notably common sense), and I’ve seen examples where human skills have improved.

In my own practice, my critical thinking skills have improved through weekly AI use over the last two years. It’s what I class as an unexpected but welcome benefit.

This doesn’t happen for all, though.

It depends on the person, of course.

BCG’s findings seem to affirm my thoughts that the default will be to over-rely. I mean, why wouldn’t you? This is why any AI skills program you’re building must focus on behaviours and mindset, not just ‘using a tool.’

You can only make smart decisions if you know when, why, and how to work with AI.

But we can learn with AI too

I (probably like some of you) have beef with traditional testing in educational systems.

It’s a memory game, rather than “Do you know how to think about and break down x problem to find the right answer?” Annoying! We celebrate memory, not thinking (bizarre world).

My beef aside, research shows partnering intelligently with AI could change this.

This article, a collaboration between The Atlantic and Google, focuses on “How AI is playing a central role in reshaping how we learn through Metacognition”, and it gives me hope.

The TL;DR (too long; didn’t read) of the article is that using AI tools can enhance metacognition, aka thinking about thinking, at a deeper level.

The idea is, as Ben Kornell, managing partner of the Common Sense Growth Fund, puts it, “In a world where AI can generate content at the push of a button, the real value lies in understanding how to direct that process, how to critically evaluate the output, and how to refine one’s own thinking based on those interactions.”

In other words, AI could shift us to prize ‘thinking’ over ‘building alone.’

And that’s going to be an important thing in a land of ‘do it for me.’

To truly do so, you must know

Google’s experiments included two learning-focused examples.

In the first example, pharmacy students interacted with an AI-powered simulation of a distressed patient demanding answers about their medication.

- The simulation is designed to help students hone communication skills for challenging patient interactions.

- The key is not the simulation itself, but the metacognitive reflection that follows.

- Students are encouraged to analyse their approach: what worked, what could have been done differently, and how their communication style affected the patient’s response.

The second example asks students to create their own chatbot.

Strangely, I used the same exercise in my recent “AI For Business Bootcamp” with 12 students.

Obviously, great minds think alike 😉.

It’s never been easier for the everyday human to create AI-powered tools with no-code platforms.

Yet you and I both know that easy doesn’t mean simple. I’m sure you’ve seen the mountain of dumb headlines with someone saying we don’t need marketers/sales/learning designers because we can do it all in ‘x’ tool.

Ha ha ha ha is what I say to them.

Clicking a button that says ‘create’ with one sentence doesn’t mean anything.

To demonstrate this to my students, we spent 3 hours in an “AI Assistant Hackathon.” This involved the design, build, and delivery of a working assistant.

What they didn’t know is I wasn’t expecting them to build a product that worked.

Not well, anyway.

I spent the first 20 minutes explaining that creating a ‘good’ assistant has nothing to do with what tool you build it in and everything to do with how you design it.

Social media will try to convince you that all it takes is 10 minutes to build a chatbot.

While that’s true from a tech perspective, the product, and its performance, will suck.

Just because you can doesn’t mean you will (not without effort!).

When the students completed the hackathon, one thing became clear.

It’s not as simple or easy to create a high-quality product, and you’re certainly not going to do it in minutes.

But, like I said, the goal of the activity was not to build an assistant but to understand how to think deeply about ‘what it takes’ to build a meaningful product.

I’m talking about:

- Understanding the problem you’re solving

- Why it matters to the user

- Why the solution needs to be AI-powered

- How the product will work (this covers the user experience and interface)

Most students didn’t complete the assistant/chatbot build, and that’s perfect.

It’s perfect because they learned, through real practice, that it takes time and a lot of deep thinking to build a meaningful product.

“It’s not about whether AI helped write an essay, but about how students directed the AI, how they explained their thought process, and how they refined their approach based on AI feedback. These metacognitive skills are becoming the new metrics of learning.”

Shantanu Sinha, Vice President and General Manager of Google for Education

AI is only as good as the human using it

The section title says it all.

Perhaps the greatest ‘mistake’ made in all this AI excitement is forgetting the key ingredient for real success.

And that’s you and me, friend.

Like any tool, it only works in the hands of a competent and informed user.

I learned this fairly young when a power drill was thrust into my hands for a DIY mission. Always read the instructions, folks (another story for another time).

Anyway, all my research and real-life experience with building AI skills have shown me one clear lesson.

You need human skills to unlock AI’s capabilities.

You won’t go far without a strong sense (and clarity) of thinking, and the analytical judgment to review outputs.

Going back to the BCG report, a few things to note that support this:

1/ Companies are confusing AI ‘augmenting’ with ‘skill building’

As we touched on earlier, AI gives you temporary superpowers.

Together (you and AI) you can do wonderful things. Divided, not so much (unless you have the prerequisite knowledge to do the task).

We can already see both companies and workers misjudging their ability to (actually) perform a task.

AI gives both a false sense of skills, and terror at the lack of them.

2/ Most people can’t evaluate AI outputs

Again, any of us can code with AI.

But that doesn’t mean we know what’s going on or how to check if it’s correct.

This is the trap anyone can fall into. Knowing how to validate AI outputs is critical. We need to pay more attention to this. You know, thinking about thinking, and all that.

3/ Without context, you’re doomed

Content without context is worthless.

That’s a general rule. Exceptions apply at times. Nonetheless, you need the context of when and when not to use AI tools to get results.

As we know, it’s not a silver bullet.

The solution to this is getting a better understanding of Gen AI fundamentals.

Another BCG report, in collaboration with Harvard, found that success in work tasks with AI came down to knowing when to call on those superpowers.

How to help humans use AI for REAL learning

OK, we can see a potential problem if this is left unchecked.

Here are a few ideas, tools and actions to do something about it:

1/ Cover AI fundamentals

This is too often ignored, with people going straight to tools.

Yet, knowing how and why a technology works means you become the chess player, and not a chess piece that’s moved by every new model and tool.

The world has lots of resources to help you with this.

Here are some from my locker:

- AI Explained: What it means and what it doesn’t

- Generative AI for humans

- The 5-step ‘make me AI confident’ learning strategy

- The difference between AI agents and assistants

2/ Don’t confuse ‘do it for me’ with ‘learning to do’

While AI can enable individuals to complete tasks they wouldn’t be able to do independently, this doesn’t automatically translate to skill acquisition.

Help people recognise the difference.

To truly learn anything, you need a combination of:

- Understanding key concepts

- Engaging in practice

- Committing to improve

3/ Nurture your Human Chain of Thought

I introduced this concept in last week’s edition.

You might have heard me say “AI is only as good as the human using it” like a broken record.

You need human skills to unlock AI’s full potential.

4/ Encourage critical thinking before and after using AI

Despite what social media gurus say, we all very much need to use our brains when working with AI.

If you want to do useful stuff, that is.

I’ve previously shared a system you can use to achieve this in all your AI interactions. You’ll stand out from the digital zombies with this.

5/ Prompt an Engineer’s Mindset

BCG refers to this as the ‘engineer’s mindset’ because it originates mostly from engineering roles (both physical + digital).

I call it the ‘Builder’s mindset’, and I think this is a cheat code for life.

I would say I’m only as successful as I have been because of it. I learned it during my teenage years of coding in SQL and Java. It’s built around the principle of understanding the what, why, and how of building anything.

Back in the day, I used it to build SQL-based reporting applications.

I didn’t even think about building the app until I knew more about the consumer.

Simple things like:

- Who are they?

- What problems are they having?

- Why are those problems happening?

- What would this look like if it were easier for them?

Over the years, I’ve adapted this into all my work, especially writing.

As of today, before I begin any work, I ask:

- Why am I building this?

- What problem is it solving?

- Does it pass the ‘So What’ test?

- How will I build it?

I can only solve a problem or create a meaningful post/product/newsletter/video if I know the above.

Like a builder, you piece together an end goal.

When you reveal this, the next part is easy → Reverse engineer this process.

As this is such an important point, I need more than the written word to explain this.

So, here’s a short video where I explain how to use this framework:

Modern ways to reshape skill-building with AI

I’ve spoken a lot about AI coaches.

We can throw AI tutors into that mix, too.

Here’s how I see the difference btw:

- AI Tutor = Breaks down concepts and works in more of a professor style

- AI Coach = Works with you in a live environment to solve challenges together. Basically, the new “Learning in the flow” but with AI.

Of course, these terms are interchangeable, and the capabilities can be merged.

FYI, today’s NL partner, Sana, is doing a great job in this department with their soon-to-be-released AI tutor. You should check that out.

Often, I find it’s easier to show you what I’m talking about with AI than try to describe it to you, so here are examples of both:

Using AI as a Tutor with Google AI Studio

Using AI as a Coach with Google AI Studio

In case you’re wondering, I use Google AI Studio to show these features because it’s easy to access for most people.

It’s a sandbox where you can experiment.

But you shouldn’t use it for work; treat it purely as a place to experiment. For the workplace, more Tutor and Coach tools are entering the market.

Final thoughts

So, will AI destroy or amplify your skills?

It will destroy them only if you let it.

This is by no means a closed book. No doubt, I’ll cover more on this as time goes on.

For now, be smart:

- Craft your builder’s mindset

- Borrow superpowers, but build real ones through practice

- AI is powerful and has great potential, but don’t forget the unique human and technical skills you need to be ‘fit for life.’
